Color depth, in simple terms, refers to the number of bits used to represent the color of a single pixel. The greater the color depth, the more shades the TV can show, making gradients smoother and fine color detail easier to see.

Common color depths for TVs include 8-bit and 10-bit. 8-bit color depth covers more than 16.7 million shades, while 10-bit color depth covers more than a billion. Even with such a big difference, the two are hard to tell apart in everyday viewing. The difference is easiest to see with HDR content on a TV that supports HDR.

Moreover, you should know that how much of that color depth you actually see on screen depends on brightness and other parameters, such as color gamut, contrast ratio, color accuracy, and so on.

In this article, we’ll journey through the technical aspects of color depth, explore its relationship with other TV features like brightness and HDR, and even peek into its future.

So let’s dive in!

What is color depth anyway?

As written above, TV color depth describes the number of different shades of color that a TV can display. It is usually quoted in bits per color channel; counted per pixel, 8 bits per channel adds up to 24 bits per pixel (bpp). For example, an 8-bit color depth means the TV has 256 shades each of red, green, and blue to play with. 256 x 256 x 256 gives about 16.7 million possible colors.

Why is it counted like that? That’s because each pixel is made up of 3 subpixels: red, green, and blue (RGB). For example, each subpixel of a TV with 8-bit color depth can display 256 shades. In turn, a 10-bit color depth TV subpixel can display 1024 shades.

And the more shades, the smoother the transition from one color to another, which reduces the banding effect (visible steps in what should be a smooth gradient).
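To make banding a little more concrete, here is a minimal Python sketch. It assumes a single color channel ramping evenly from black to full intensity across one 3,840-pixel row of a 4K screen, with no dithering or other processing; the function names are made up for this illustration.

```python
def quantize_gradient(samples: int, bits: int) -> list[int]:
    """Map a smooth 0.0-1.0 ramp onto the integer levels available at a bit depth."""
    max_level = (1 << bits) - 1  # 255 for 8-bit, 1023 for 10-bit
    return [round(i / (samples - 1) * max_level) for i in range(samples)]


def count_bands(levels: list[int]) -> int:
    """Count runs of identical neighbouring values; each run is one flat band."""
    bands = 1
    for previous, current in zip(levels, levels[1:]):
        if current != previous:
            bands += 1
    return bands


# One 4K-wide row (assumed width), black to full intensity on a single channel.
width = 3840
for bits in (8, 10):
    bands = count_bands(quantize_gradient(width, bits))
    print(f"{bits}-bit: {bands} bands, about {width / bands:.1f} pixels wide each")
```

In this simplified setup the 8-bit ramp breaks into 256 flat bands and the 10-bit ramp into 1,024 narrower ones, which is why the steps are harder to spot at the higher depth.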

Can human eyes actually spot the difference between 8-bit and 10-bit color depth? Essentially, it’s like trying to spot the difference between two very similar shades of blue. It can be quite noticeable in some scenes, especially those with smooth gradients like sunsets. But in others, it might be a tad subtler.

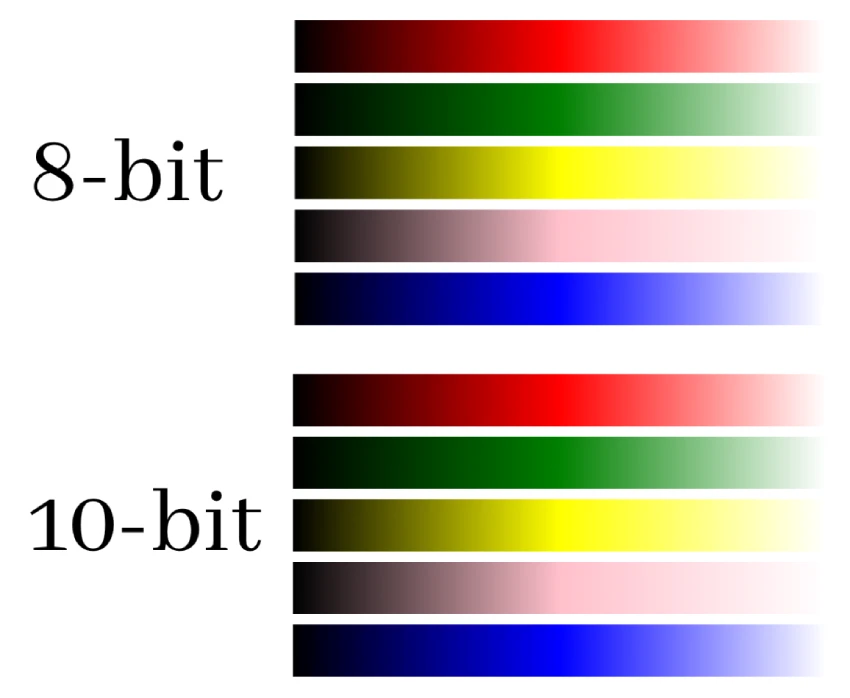

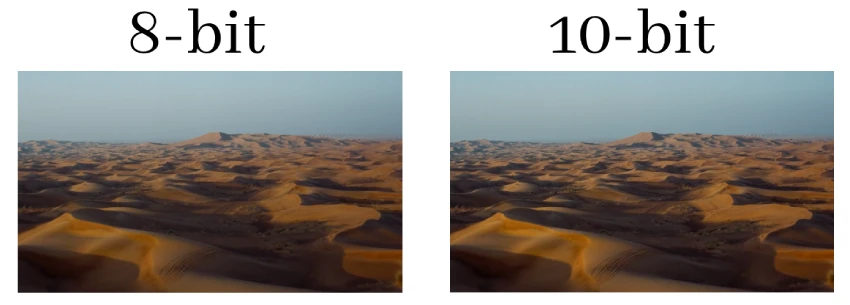

Look at the screenshots above.

As you can see, the difference between 8-bit and 10-bit is hardly noticeable.

What is color depth’s impact on a TV image?

The 10-bit signal does allow for more nuance in the colors displayed, making gradients smoother and reducing the likelihood of a “staircase effect” or banding in the image, especially in areas with smooth transitions such as sunsets or shadows.

If you have a display that can receive a 10-bit signal, but the content you are viewing is not 10-bit HDR, you will not get the full benefit of this color depth. The image will be displayed at the color depth of the source (e.g., 8-bit).
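As a rough rule of thumb, the picture is limited by the weaker link in the chain. The sketch below expresses that idea in Python; it is a simplification that ignores any dithering or upscaling a TV may apply, and the function names are illustrative only.

```python
def effective_bit_depth(source_bits: int, display_bits: int) -> int:
    """The lower of the two depths limits what reaches the screen (simplified)."""
    return min(source_bits, display_bits)


def shades_per_channel(bits: int) -> int:
    """Number of distinct levels one color channel has at a given bit depth."""
    return 2 ** bits


# Assumed scenarios: (source depth, display depth) in bits per channel.
for source_bits, display_bits in [(8, 10), (10, 10), (10, 8)]:
    depth = effective_bit_depth(source_bits, display_bits)
    print(f"{source_bits}-bit source on a {display_bits}-bit display: "
          f"{depth}-bit, {shades_per_channel(depth):,} shades per channel")
```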

However, when viewing 10-bit HDR content on a compatible display, the difference in image quality becomes noticeable, especially in high dynamic range scenes where details in dark and light areas become more visible, and colors become richer and more accurate.

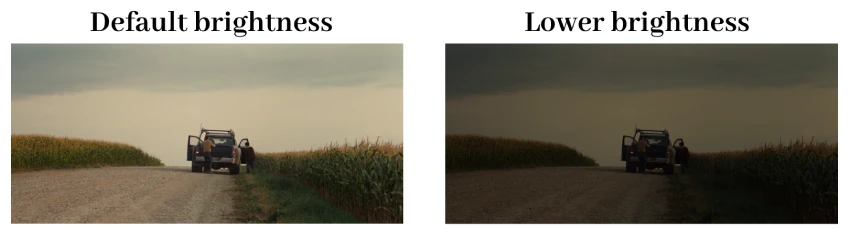

Here you can see the 8-bit frame in comparison to the 10-bit frame with HDR.

Now, let’s relate this to human perception. The human eye is estimated to distinguish roughly 2 to 10 million colors under typical conditions. And while this is fewer than TVs with 8-bit and 10-bit color depth can display, you’ll still be able to notice the difference between the two, because it shows up as smoother transitions between shades.

Bits vs. Colors: Understanding bit depth

In the context of displays and digital imaging, bit depth refers to the number of bits used to represent color, usually quoted per primary color (channel) of a pixel. This bit depth determines the number of possible colors that pixels can display.

8-bit

An 8-bit color depth means that each primary color (red, green, and blue) is represented using 8 bits. This results in:

- 2^8 = 256 possible shades for each primary color.

- When combining the three primary colors, the total number of possible colors is 256 x 256 x 256 = 16,777,216 colors.

10-bit

A 10-bit color depth allocates 10 bits for each primary color, leading to:

- 2^10 = 1,024 possible shades for each primary color.

- The total number of combined colors is 1,024 x 1,024 x 1,024 = 1,073,741,824 colors, which is over a billion possible colors.

12-bit

With a 12-bit color depth, each primary color is represented using 12 bits, resulting in:

- 2^12 = 4,096 shades for each primary color.

- The combined total is 4,096 x 4,096 x 4,096 = 68,719,476,736 colors.
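The arithmetic above follows one pattern: 2 to the power of the bit depth gives the shades per primary color, and cubing that gives the total for three primaries. A short Python sketch (function names are just for illustration) reproduces the figures:

```python
def shades_per_channel(bits: int) -> int:
    """Shades available for one primary color at the given bit depth."""
    return 2 ** bits


def total_colors(bits: int, channels: int = 3) -> int:
    """Combined colors when every channel (red, green, blue) has the same depth."""
    return shades_per_channel(bits) ** channels


for bits in (8, 10, 12):
    print(f"{bits}-bit: {shades_per_channel(bits):,} shades per channel, "
          f"{total_colors(bits):,} colors")
```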

How does color depth impact other parameters?

Color depth is only one of many parameters that affect the TV picture. Brightness, contrast ratio, color gamut, color volume, and color accuracy all interact with each other to shape picture quality. Let’s look below at how color depth affects and interacts with the other parameters.

TV brightness

Brightness, measured in nits, indicates the luminance or intensity of light emitted from a display.

Higher brightness levels can accentuate the distinctions between close shades, making the benefits of increased color depth more apparent. Conversely, the nuances between similar colors (afforded by greater color depth) may become less distinguishable at lower brightness levels.

Brightness itself does not set a TV’s color depth, but the two are linked: the wider the brightness range a TV covers, the more shades it needs to keep the transition from one shade to the next smooth. For example, standard dynamic range (SDR) content, mastered for roughly 100 nits, is typically delivered in 8-bit color, while HDR content, made for much brighter displays, uses 10-bit color or more.

In HDR displays, where brightness levels are significantly elevated, the advantages of higher color depth are especially pronounced, as the display can render subtle color variations even in intensely bright or dark scenes.
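A deliberately simplified Python sketch shows why a wider brightness range benefits from more bits. It assumes the available levels are spread evenly from 0 nits to the peak, which real TVs do not do (they use non-linear curves that pack more levels into dark tones), so treat the numbers as an illustration only. The peak values are example figures, not measurements.

```python
def average_step_nits(peak_nits: float, bits: int) -> float:
    """Average brightness jump between neighbouring levels, assuming even spacing."""
    steps = (1 << bits) - 1  # number of gaps between adjacent levels
    return peak_nits / steps


# Example peak brightness values (assumed) paired with a bit depth.
for peak_nits, bits in [(100, 8), (1000, 8), (1000, 10)]:
    step = average_step_nits(peak_nits, bits)
    print(f"{peak_nits} nits at {bits}-bit: about {step:.2f} nits per step")
```

Under this assumption, stretching 8-bit color over a ten times brighter range makes each step ten times coarser, while moving to 10-bit brings the step size back down.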

Also, you should be aware that as the brightness of your TV decreases, the colors will also lose their saturation and fade. However, the smoothness of the transition between colors will not be affected.

Color accuracy

Color accuracy pertains to a display’s ability to represent colors as they are intended or as they exist in the real world. It measures how closely the displayed colors match reference standards or original content.

A higher color depth enhances color accuracy by allowing for finer gradations of colors. This means subtle shades, which might be averaged out or omitted at lower color depths, can be precisely represented. As a result, images and videos appear more true-to-life, with fewer artifacts like banding in gradients.

In essence, while color depth provides the potential for detailed color representation, it’s the accuracy with which those colors are displayed that determines the fidelity to the original content.

Contrast ratio

The contrast ratio represents the ratio between the brightest white and the darkest black that a display can produce. It’s a measure of the display’s ability to showcase details in an image’s bright and dark areas.

A higher color depth contributes to a more nuanced representation of colors within the spectrum between the darkest and brightest points. This means that with increased color depth, the transitions between light and dark areas become smoother, and the display can represent more shades in between, enhancing the perceived contrast.

While contrast ratio sets the extremes of brightness and darkness, color depth determines the granularity and precision of color representation within those extremes.
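The ratio itself is simple division, as this small Python sketch shows. The brightness figures are hypothetical examples, and the function name is made up for this illustration.

```python
def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Brightest white divided by darkest black, both in nits."""
    if black_nits <= 0:
        # A pixel that is fully off emits no light, so the ratio is unbounded.
        return float("inf")
    return white_nits / black_nits


# Hypothetical readings: (white level, black level) in nits.
for white, black in [(500, 0.1), (500, 0.0)]:
    ratio = contrast_ratio(white, black)
    print(f"white {white} nits, black {black} nits -> {ratio:,.0f}:1")
```

Color depth then decides how many distinct steps are available between those two extremes.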

Color volume

Color volume measures the range of colors a display can produce at varying brightness levels. It combines aspects of color gamut (the range of colors a display can show) with brightness levels to represent the total capability of a display in terms of color and luminance.

A higher color depth directly influences color volume by enabling the display to represent finer gradations within its color gamut at different brightness levels. With increased color depth, the display can showcase more nuanced colors across its entire luminance range, leading to a richer and more detailed color volume.

In essence, color depth provides the granularity of color representation, while color volume captures the full spectrum of these colors across all brightness levels.

Color gamut

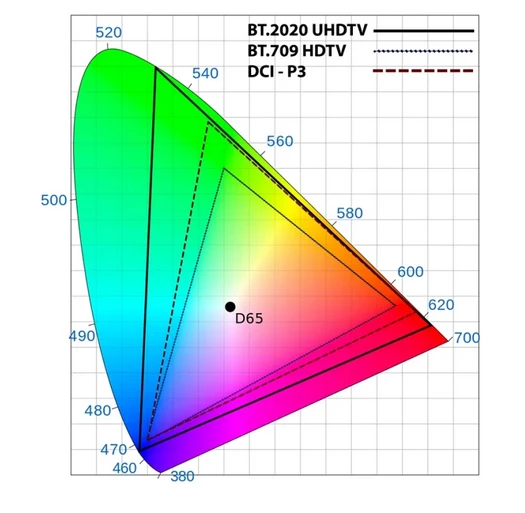

Color gamut defines the range of colors that a display can represent, typically referenced to a specific color space standard such as Rec.709, DCI-P3, or Rec.2020.

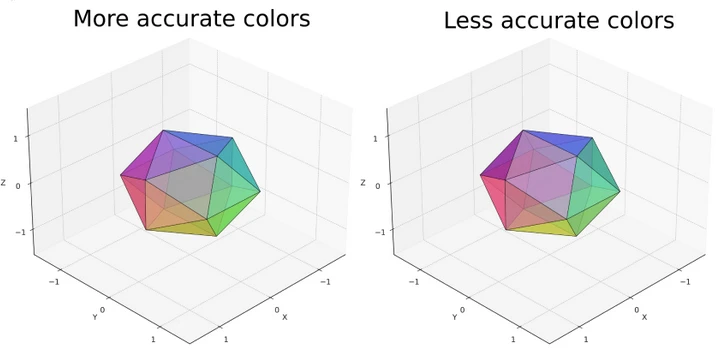

The chart shows the color gamut (color range) as a diagram, with triangles marking the Rec.709, DCI-P3, and Rec.2020 color ranges.

An increased color depth enhances the precision within the defined color gamut. While the color gamut sets the outer boundaries of color representation, color depth determines the subtlety and granularity of colors within those boundaries.

Thus, a higher color depth ensures that the display can accurately represent the nuances and variations of colors within its gamut, reducing artifacts like banding.
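For a rough sense of how the three gamut triangles compare, the Python sketch below computes each triangle's area from the CIE 1931 xy coordinates of its red, green, and blue primaries. Area on this diagram is not a perceptually uniform measure, so the ratios are only a coarse comparison, not a statement about how many more colors you would see.

```python
# CIE 1931 xy chromaticity coordinates of the red, green, and blue primaries.
PRIMARIES = {
    "Rec.709": [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)],
    "DCI-P3": [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)],
    "Rec.2020": [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)],
}


def triangle_area(points: list[tuple[float, float]]) -> float:
    """Area of a triangle from its three corner points (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


base = triangle_area(PRIMARIES["Rec.709"])
for name, points in PRIMARIES.items():
    area = triangle_area(points)
    print(f"{name}: area {area:.4f}, {area / base:.2f}x Rec.709")
```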

Do 12-bit TVs actually exist?

Today, 12-bit color technology in TVs remains more of a theoretical possibility than a reality. While standards such as Dolby Vision offer support for up to 12-bit color at 10,000 nits of brightness, most modern TVs can’t even come close to reaching that brightness to reproduce such a wide range of shades fully.

Even in high-end models, like the Samsung S95C, peak brightness stays well below 10,000 nits, making full use of 12-bit color unattainable.

Color depth on LED TVs vs. OLED TVs: Comparison

LED TVs:

Traditional LED TVs use a backlighting system to illuminate the liquid crystal cells that determine color reproduction. Although modern LED TVs can achieve 10-bit color depth, the backlighting system can sometimes limit their color depth. The uniformity of the backlight can affect how colors are displayed, especially in darker scenes.

To improve image quality, Samsung experimented with new quantum dot materials. These quantum dots promised improved color reproduction and brightness compared to traditional LED materials. However, despite the advantages of quantum dots, they could not match the image quality provided by OLED technology.

OLED TVs:

OLED displays use organic compounds that emit their own light in each pixel, eliminating the need for a backlight. This allows OLED TVs to achieve true blacks by simply turning off individual pixels. The inherent technology of OLED screens allows for superior color depth and contrast ratios. OLED TVs typically offer 10-bit color depth. And turning off the pixels gives them the advantage of displaying darker shades more smoothly than LED TVs.

In Conclusion:

Both LED TVs and OLED TVs can offer excellent color depth, especially in their high-end models. However, the inherent technology differences mean that OLEDs often have an edge in terms of contrast and true black levels, which can enhance perceived color depth. On the other hand, LED TVs, especially QLEDs, can shine in terms of sheer brightness, which can be beneficial for viewing in well-lit rooms and for certain HDR content. The choice between them often comes down to viewing environment, content preferences, and budget.

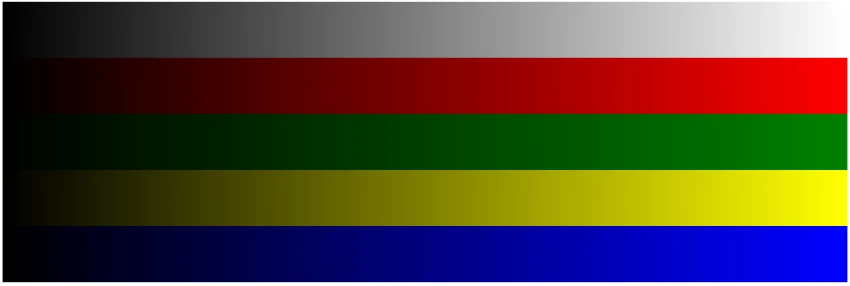

How to check the TV’s color depth

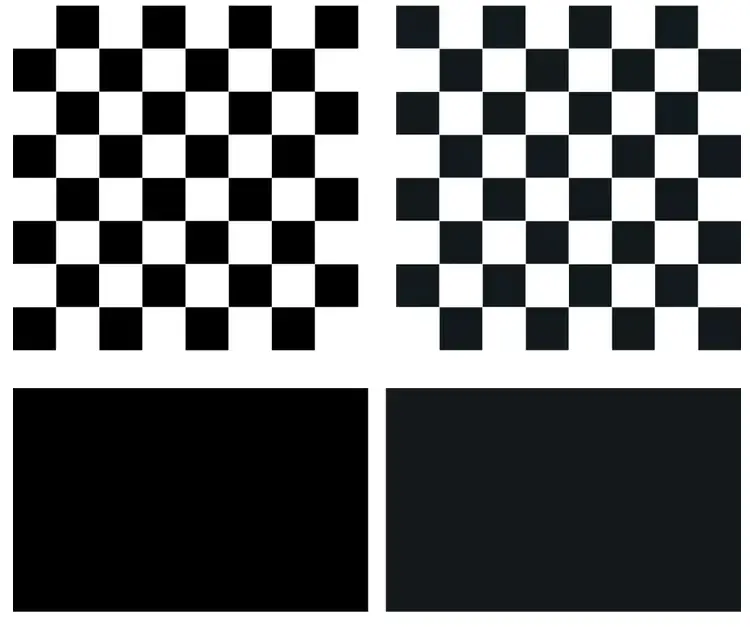

Experts use a test pattern to check the color depth of a TV. There are specialized test images designed for this purpose. They look similar to the one you can see in the photo above.

This pattern shows colors transitioning from their darkest to brightest shades.

To begin the test, experts connect a laptop to the TV with an HDMI cable and display a similar test pattern on the TV screen.

First, they look closely to see how smoothly the colors change. If there are smooth transitions with no abrupt changes in color, banding, or lines, the TV is most likely 10-bit. However, this is only a preliminary visual check.

For a more accurate assessment, experts use special equipment like a colorimeter, a device designed to measure the color of light. They point a colorimeter at the TV screen and connect it to a laptop.

Pointed at the pattern, the colorimeter captures the color values displayed on the TV. This data is then processed by specific software, which calculates the standard deviation for each color.

The standard deviation serves as a metric to determine how closely the displayed image matches the original test pattern. A lower standard deviation indicates a more accurate representation of the color. A result of less than 0.12 indicates a TV with excellent color accuracy and depth.
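The article does not name the software or the exact formula, so the following Python sketch is only one plausible reading of the calculation: for each color channel, take the difference between what the colorimeter measured and what the test pattern asked for, then compute the standard deviation of those differences. The readings are invented sample data on an assumed 0 to 1 scale, and because the scale behind the 0.12 figure is not specified, the sketch reports the values without judging them.

```python
from statistics import pstdev


def channel_deviation(reference: list[float], measured: list[float]) -> float:
    """Standard deviation of the (measured - reference) errors for one channel."""
    if len(reference) != len(measured):
        raise ValueError("reference and measured must have the same length")
    errors = [m - r for r, m in zip(reference, measured)]
    return pstdev(errors)


# Hypothetical test pattern steps and colorimeter readings, normalized to 0-1.
reference = [0.00, 0.25, 0.50, 0.75, 1.00]
readings = {
    "red": [0.01, 0.24, 0.52, 0.74, 0.99],
    "green": [0.00, 0.26, 0.50, 0.76, 1.00],
    "blue": [0.03, 0.21, 0.55, 0.70, 0.97],
}

for channel, measured in readings.items():
    deviation = channel_deviation(reference, measured)
    print(f"{channel}: standard deviation {deviation:.4f}")
```

A lower value means the measured steps track the test pattern more consistently for that channel.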